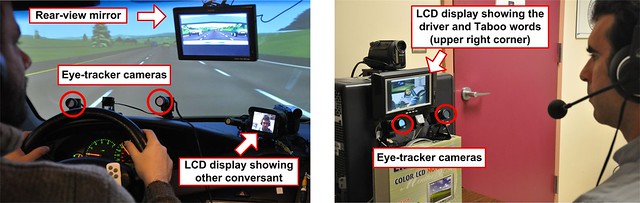

Our short paper [1] on using changes in pupil diameter to estimate cognitive load was accepted to the Eye Tracking Research and Applications 2010 (ETRA 2010) conference. The lead author is Oszkar Palinko, and the co-authors are my PhD student Alex Shyrokov, my OHSU collaborator Peter Heeman, and me.

In previous experiments in our lab we have concentrated on performance measures to evaluate the effects of secondary tasks on the driver. Secondary tasks are those performed in addition to driving, e.g., interacting with a personal navigation device. However, as Jackson Beatty has shown, people’s pupils dilate when their cognitive load increases [2]. This fascinating phenomenon provides a physiological measure of cognitive load. Why is it important to have multiple measures of cognitive load? As Christopher Wickens points out [3], having more than one measure allows us to avoid circular arguments such as “… saying that a task interferes more because of its higher resource demand, and its resource demand is inferred to be higher because of its greater interference.”

In a driving simulator experiment conducted by Alex, we found that performance-based and pupillometry-based (that is, physiological) cognitive load measures show high correspondence for tasks that lasted tens of seconds. In other words, both driving performance measures and pupil size changes appear to track cognitive load changes. In the experiment the driver was engaged in two spoken tasks in addition to the manual-visual task of driving. We hypothesized that different parts of these two spoken tasks present different levels of cognitive load for the driver. Our measurements of driving performance and pupil diameter changes appear to support this hypothesis. Additionally, we introduced a new pupillometry-based cognitive load measure that shows promise for tracking changes in cognitive load on time scales of several seconds.

In Alex’s experiment one of the spoken tasks required participants to ask and answer yes/no questions. We hypothesize that different phases of this task also present different levels of cognitive load to the driver. Will this be evident in driving performance and pupillometric data? We hope to find out soon!

References

[1] Oskar Palinko, Andrew L. Kun, Alexander Shyrokov, Peter Heeman, “Estimating Cognitive Load Using Remote Eye Tracking in a Driving Simulator,” ETRA 2010

[2] Jackson Beatty, “Task-evoked pupillary responses, processing load, and the structure of processing resources,” Psychological Bulletin, 1982, Vol. 91, No. 2, 276-292

[3] Christopher D. Wickens, “Multiple resources and performance prediction,” Theoretical Issues in Ergonomics Science, 2002, Vol. 3, No. 2, 159-177