On Tuesday (August 3, 2010) I attended SpeechTEK 2010. I had a chance to see several really interesting talks, including the lunch keynote by Zig Serafin, General Manager, Speech at Microsoft. He and two associates discussed, among other topics, the upcoming releases of Windows Phone 7 and of Kinect for Xbox 360 (formerly Project Natal). We also saw successful live demonstrations of both technologies.

One of Zig’s associates to take the stage was Larry Heck, Chief Scientist, Speech at Microsoft. Larry believes that there are three areas of research and development that will combine to make speech a part of everyday interactions with computers. First, the advent of ubiquitous computing and the need for natural user interfaces (NUIs) means that we cannot keep relying on GUIs and keyboards for many of our computing needs. Second, cloud computing makes it possible to gather rich data to train speech systems. Finally, with advances in speech technology we can expect to see search move beyond typing keywords (which is what we do today sitting at our PCs) to conversational queries (which is what people are starting to do on mobile phones).

I attended four other talks with topics relevant to my research. Brigitte Richardson discussed her work on Ford’s Sync. It’s exciting to hear that Ford is coming out with an SDK that will allow integrating devices with Sync. This appears to be a similar approach to ours at Project54 – we also provide an SDK which can be used to write software for the Project54 system [1]. Eduardo Olvera of Nuance discussed the differences and similarities between designing interfaces for speech interaction and those for interaction on a small form factor screen. Karen Kaushansky of TellMe discussed similar issues focusing on customer care. Finally, Kathy Lee, also of TellMe, discussed her work on a diary study exploring when people are willing to talk to their phones. This work reminded me of an experiment in which Ronkainen et al. asked participants to rate the social acceptability of mobile phone usage scenarios they viewed in video clips [2].

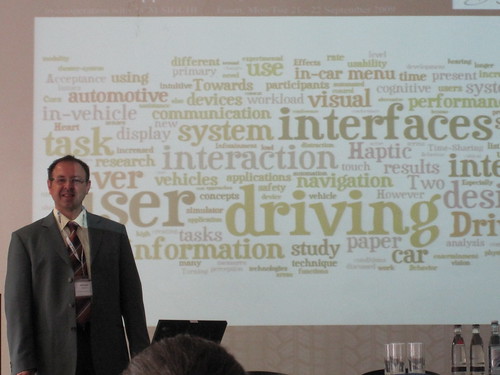

I also had a chance to give a talk reviewing some of the results of my collaboration with Tim Paek of Microsoft Research. Specifically, I discussed the effects of speech recognition accuracy and push-to-talk (PTT) button usage on driving performance [3] and the use of voice-only instructions for personal navigation devices [4]. The talk was very well received by the audience of over 25, with many follow-up questions. Tim also gave this talk earlier this year at Mobile Voice 2010.

For pictures from SpeechTEK 2010 visit my Flickr page.

References

[1] Andrew L. Kun, W. Thomas Miller, III, Albert Pelhe and Richard L. Lynch, “A software architecture supporting in-car speech interaction,” IEEE Intelligent Vehicles Symposium 2004

[2] Sami Ronkainen, Jonna Häkkilä, Saana Kaleva, Ashley Colley, Jukka Linjama, “Tap Input as an Embedded Interaction Method for Mobile Devices,” TEI 2007

[3] Andrew L. Kun, Tim Paek, Zeljko Medenica, “The Effect of Speech Interface Accuracy on Driving Performance,” Interspeech 2007

[4] Andrew L. Kun, Tim Paek, Zeljko Medenica, Nemanja Memarovic, Oskar Palinko, “Glancing at Personal Navigation Devices Can Affect Driving: Experimental Results and Design Implications,” Automotive UI 2009
