Today was my first lecture in BME’s Autonomous Robots and Vehicles Lab (Autonóm robotok és járművek laboratórium). This lab is led by Bálint Kiss, who is my host during my Fulbright scholarship in Hungary.

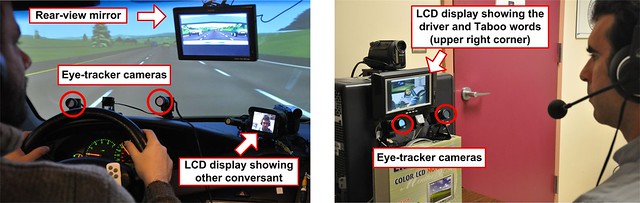

Today’s lecture covered the use of eye trackers in designing human-computer interaction. I talked about our work on in-vehicle human-computer interaction, and drew parallels to human-robot interaction. Tomorrow I’ll introduce the class to our Seeing Machines eye tracker, and in the coming weeks I’ll run a number of lab sections in which the students will conduct short experiments in eye tracking and pupil diameter measurement.

Here’s the overview of today’s lecture, translated from the Hungarian original (I’m thrilled to be teaching in Hungarian):

Using Eye Trackers to Evaluate Human-Computer Interaction (Szemkövetők használata az ember-gép interakció értékelésében)

Researchers at the University of New Hampshire have been working on in-vehicle human-computer interfaces for more than a decade. This lecture first gives a brief overview of the development and deployment of the Project54 system, designed for police vehicles. The system provides user interfaces in several modalities, including speech. The lecture then reports on recent driving simulator experiments in which we used data from the simulator and from an eye tracker to estimate the driver’s cognitive load, driving performance, and visual attention to the outside world.

Through this lecture, students will gain insight into how eye trackers can be used to evaluate and design human-computer interaction. Human-computer interaction, in turn, is a central problem in the successful deployment of autonomous robots, because autonomous robots will not be used only by experts. On the contrary, these robots will find users in every part of society. Such widespread deployment can only succeed if the human-computer interaction is acceptable to those users.