During the fall 2016 semester I will be teaching a course exploring the fundamentals of ubiquitous (or pervasive) computing. The course is listed as ECE 724/824 Ubiquitous Computing Fundamentals. This is the fourth time this course will run – the first time was in 2010.

Ubicomp certificate

This course is required for the new Graduate Certificate in Ubiquitous Computing.

Why ubiquitous computing?

We have entered the third era of modern computing. This era is defined by computing devices that are embedded in everyday objects and become part of everyday activities. These devices are also connected to other devices or networks in an effort to share or gather information. Ubiquitous computing is a multidisciplinary field of study that explores the design and implementation of such embedded, networked computing devices.

The course in a nutshell

The Ubiquitous Computing Fundamentals course has two major thrusts:

1. Lectures: The lectures will introduce fundamental material drawn from papers, a textbook edited by John Krumm, and many research videos. Topics will include system software for supporting ubicomp, human-computer interaction in ubicomp systems, privacy issues, context awareness, and location-based services.

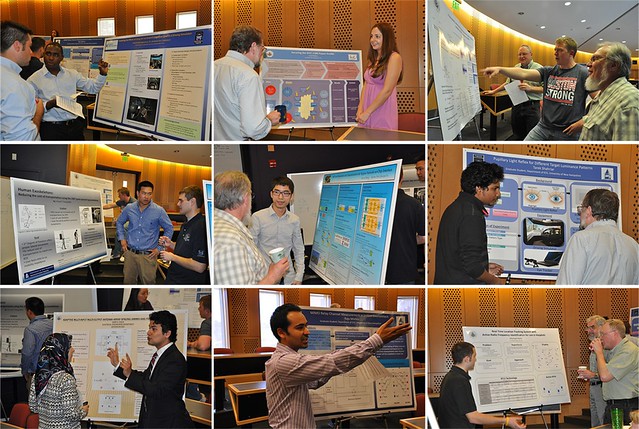

2. Projects: Following a project requirements document, students will work in teams or individually. They will first select a topic with the guidance of the instructor, then prepare a proposal, complete the project, and report on it at the end of the semester through a written document and an oral presentation. Videos are encouraged.

Two past projects

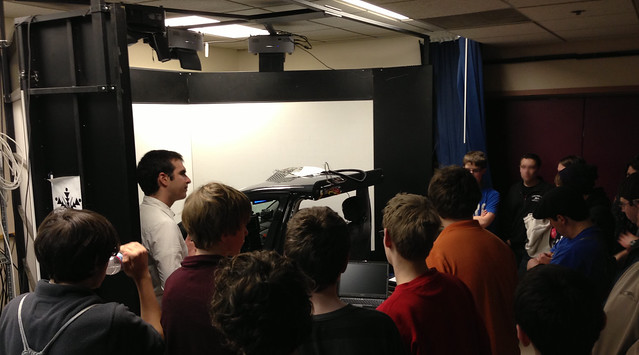

Here are two videos from 2010 to give you a taste of what a ubicomp project might look like.

Video 1: Data entry using handheld computers vs. paper

Video 2: Exploring group interaction with a multi-touch table

Who is this course for?

The students who will benefit most from the course are seniors, graduate students, and professionals with an EE, CompE, CS, or IT background.

Organizational details

The course will run online asynchronously. There will be no in-class meetings.

For grading and other course policies, see the ECE900-Online-Syllabus.

Questions?

Send email to andrew DOT kun AT unh DOT edu.